PlenOctrees

For Real-time Rendering of Neural Radiance Fields

ICCV 2021 (Oral)

- Alex Yu 1

- Ruilong Li 1,2

- Matthew Tancik 1

- Hao Li 1,3

- Ren Ng 1

- Angjoo Kanazawa 1

- 1 UC Berkeley

- 2 USC ICT

- 3 Pinscreen

We propose a framework that enables real-time rendering of Neural Radiance Fields (NeRFs) using plenoptic octrees, or "PlenOctrees". Our method can render at more than 150 fps at 800x800px resolution, which is over 3000x faster than conventional NeRFs, without sacrificing quality.
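As a rough sanity check of these figures, the sketch below converts the frame rate into a per-frame time budget and a ray throughput. It assumes one primary ray per pixel and takes the 150 fps and 3000x figures at face value. Both are stated as lower bounds, so the implied NeRF frame time is only an order-of-magnitude estimate. The variable names are illustrative.

```python
# Back-of-the-envelope arithmetic for the headline numbers (illustrative only).
FPS = 150                 # stated lower bound on PlenOctree frame rate
WIDTH, HEIGHT = 800, 800  # rendering resolution in pixels
SPEEDUP = 3000            # stated lower bound on speedup over a conventional NeRF

frame_time_ms = 1000.0 / FPS                          # time budget per frame
rays_per_second = FPS * WIDTH * HEIGHT                # assumes one primary ray per pixel
nerf_frame_time_s = SPEEDUP * frame_time_ms / 1000.0  # implied NeRF time per frame

print(f"{frame_time_ms:.2f} ms/frame, {rays_per_second:,} rays/s, "
      f"~{nerf_frame_time_s:.0f} s per NeRF frame")
```

This prints `6.67 ms/frame, 96,000,000 rays/s, ~20 s per NeRF frame`. In other words, under these assumptions the renderer has under 7 ms to resolve roughly 640,000 rays per frame.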

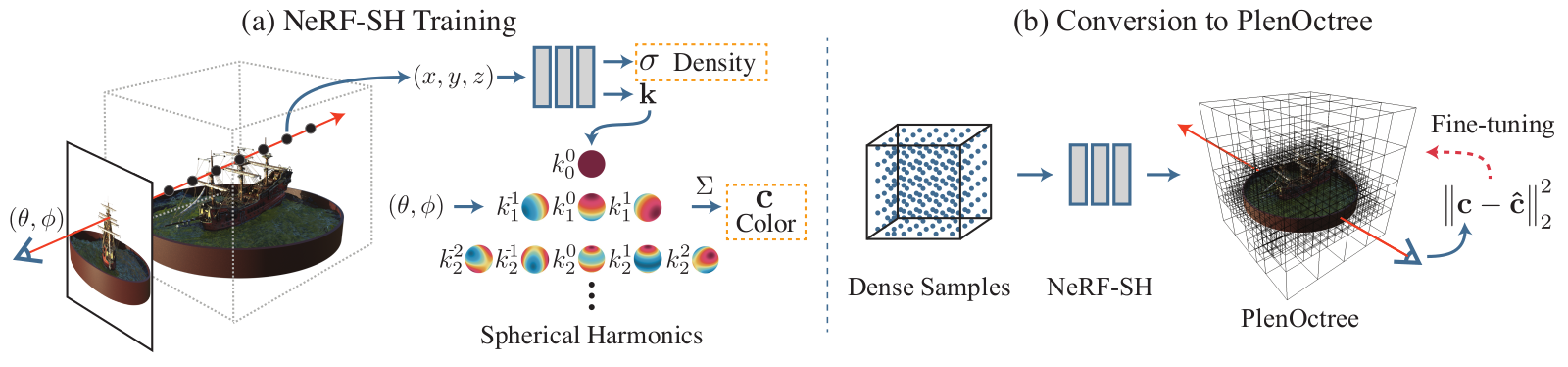

Real-time performance is achieved by pre-tabulating the NeRF into an octree-based radiance field that we call PlenOctrees. To preserve view-dependent effects such as specularities, we propose to encode appearance via closed-form spherical basis functions. Specifically, we show that it is possible to train NeRFs to predict a spherical harmonic representation of radiance, removing the viewing direction as an input to the neural network. Furthermore, we show that PlenOctrees can be directly optimized to further minimize the reconstruction loss, which leads to quality equal to or better than competing methods. This octree optimization step can also be used to reduce training time, as we no longer need to wait for the NeRF training to converge fully. Our real-time neural rendering approach may enable new applications such as 6-DOF industrial and product visualizations, as well as next-generation AR/VR systems.
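To make the spherical-harmonic idea concrete, here is a minimal sketch of how a view-dependent RGB color could be decoded from per-channel SH coefficients. It assumes real SH up to degree 2 (9 coefficients per color channel), one common sign convention for the basis, and a sigmoid applied to the weighted sum. The function names are hypothetical, and this is not presented as the authors' implementation. The point it illustrates is that the network (or an octree leaf) only needs to output coefficients; the viewing direction enters solely through the closed-form basis.

```python
import math
from typing import Sequence, Tuple

# Real spherical-harmonic normalization constants for degrees 0-2
# (one common sign convention, assumed here for illustration).
C0 = 0.28209479177387814
C1 = 0.4886025119029199
C2 = (1.0925484305920792, -1.0925484305920792, 0.31539156525252005,
      -1.0925484305920792, 0.5462742152960396)

Vec3 = Tuple[float, float, float]


def sh_basis_deg2(d: Vec3) -> Tuple[float, ...]:
    """Evaluate the 9 real SH basis functions (l = 0, 1, 2) at unit direction d."""
    x, y, z = d
    return (
        C0,                                     # l = 0
        -C1 * y, C1 * z, -C1 * x,               # l = 1
        C2[0] * x * y,                          # l = 2
        C2[1] * y * z,
        C2[2] * (2.0 * z * z - x * x - y * y),
        C2[3] * x * z,
        C2[4] * (x * x - y * y),
    )


def sigmoid(v: float) -> float:
    """Numerically stable logistic function."""
    if v >= 0.0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def sh_to_rgb(coeffs: Sequence[Sequence[float]], view_dir: Vec3) -> Vec3:
    """Decode RGB from 3 x 9 SH coefficients for a (not necessarily unit) view direction."""
    norm = math.sqrt(sum(c * c for c in view_dir))
    if norm == 0.0:
        raise ValueError("view direction must be nonzero")
    basis = sh_basis_deg2(tuple(c / norm for c in view_dir))
    rgb = []
    for channel in coeffs:
        if len(channel) != len(basis):
            raise ValueError("expected 9 SH coefficients per channel")
        # Weighted sum of basis functions, squashed to (0, 1) by a sigmoid.
        rgb.append(sigmoid(sum(k * b for k, b in zip(channel, basis))))
    return tuple(rgb)


if __name__ == "__main__":
    coeffs = [[0.0] * 9 for _ in range(3)]
    coeffs[0][2] = 2.0  # red channel only, degree-1 "z" term
    print(sh_to_rgb(coeffs, (0.0, 0.0, 1.0)))   # red > 0.5 viewed along +z
    print(sh_to_rgb(coeffs, (0.0, 0.0, -1.0)))  # red < 0.5 viewed along -z
```

With only the degree-0 coefficient set, the decoded color is the same from every direction (a diffuse surface). Higher-degree coefficients add view dependence, and raising the maximum degree trades more stored coefficients per channel for sharper angular detail.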

Real-time Online Demo

We're excited to present a live demo that runs in modern web browsers.

Note: Our full models are on the order of 2 GB in size; for online viewing, we use lower-resolution, quantized PlenOctrees of 34-125 MB, which lose approximately 0.5-1.5 dB in PSNR.
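To put the 0.5-1.5 dB figure in perspective: PSNR is 10 log10(MAX^2 / MSE), so a drop of Δ dB corresponds to multiplying the mean squared error by 10^(Δ/10). The sketch below computes both quantities; the helper names are hypothetical, and pixel values are assumed to lie in [0, 1].

```python
import math


def psnr(mse: float, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB for a given mean squared error."""
    if mse <= 0.0:
        raise ValueError("MSE must be positive")
    return 10.0 * math.log10(max_val ** 2 / mse)


def mse_factor_for_psnr_drop(drop_db: float) -> float:
    """Factor by which MSE grows when PSNR drops by drop_db decibels."""
    return 10.0 ** (drop_db / 10.0)


for drop in (0.5, 1.5):
    print(f"{drop} dB drop -> MSE x {mse_factor_for_psnr_drop(drop):.2f}")
```

This prints factors of 1.12 and 1.41. The compressed web models therefore have roughly 12% to 41% higher mean squared error than the full models, independent of the absolute PSNR.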


Concurrent Real-time NeRF Rendering Work

Several concurrent papers on fast NeRF rendering have recently been released. Please also check out their amazing work.

- FastNeRF by Garbin et al.

- SNeRG by Hedman et al. (includes an online demo)

- KiloNeRF by Reiser et al.

Acknowledgements

We thank Vickie Ye and Ben Recht for helpful discussions, Zejian Wang of Pinscreen for helping with video capture, and BAIR Commons for an allocation of GCP credits. This website is based in part on a template by Michaël Gharbi.